What do you mean I wore my Laser out?

Or why monitoring everything is a good idea.

Fibre optic components are an integral part of most networks.

At vBridge we use fibre optic networking for most things: Fibre Channel storage, customer and provider handovers, and inter-rack tie leads.

They are fast and reliable.

But what happens when they are not?

Winding the story back, this also ties into monitoring “everything”. Whether we think we need it or not, if we can measure it, we should record stats over time.

A challenge we have run into before is that some platforms charge a ‘per sensor’ license fee (e.g. SolarWinds – just as well we don’t use that, eh?), which means that teams may have to choose what is monitored and what is not. The downside is that historical information may not be available when you need it.

So, at vBridge we try to ensure that we monitor everything we can. We have a mix of paid and open-source systems and, in this case, we use Observium to monitor all the network hardware, all the ports and all the optics (thousands of ports).

But to actively monitor optics we need access to something called DOM or DDM.

DDM refers to Digital Diagnostics Monitoring. It is a technology used in SFP transceivers which gives the end user the ability to monitor real-time parameters of the transceivers. The monitored parameters include optical output power, optical input power, temperature, laser bias current, and transceiver supply voltage etc.

DOM refers to Digital Optical Monitoring. It is also a technology which allows you to monitor important parameters of the transceiver module in real-time. You can use DOM to monitor the TX (transmit) and RX (receive) ports of the module, as well as input/output power, temperature, and voltage. According to these monitored parameters, network technicians are able to check and ensure that the module is functioning well.

Typically, DDM and DOM are similar to each other. They are usually used together to describe the real-time monitoring function of SFP transceivers.

From: How to Understand DDM/DOM Function of SFP Transceiver

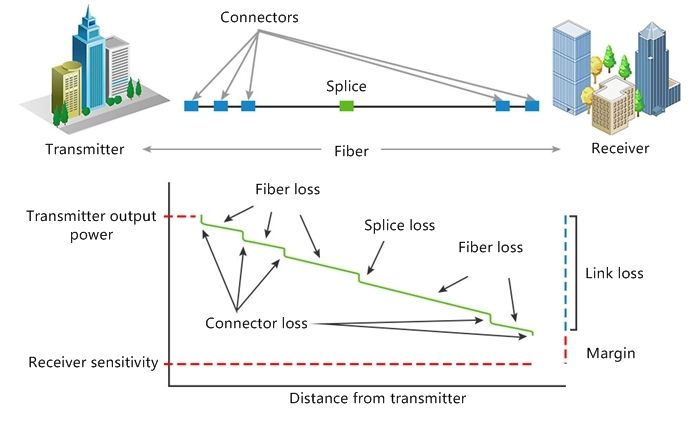

In most cases where fibre faults are suspected we would first look for a cabling issue: potential faults with the fibre cables themselves, the connectors, or any splices in the fibre path.

From: Understanding Link Budget and Link Loss in Fiber Optic Network

Where a link is working well - but then it isn’t later - you would normally expect external influences to be the cause. For example, someone knocking or damaging a patch lead, causing physical damage and increased link loss.

We mitigate most potential problems like this up front by ensuring that we comply with physical fibre requirements like minimum bend radius, using appropriate fibre optic cable trays, and making sure we keep connectors clean by using dust caps and cleaning tools. In short – don’t be rough with the fibre cables – look after them.

Another potential issue is received power tailing off at the far-end optic. This can be due to cable problems, but also due to the receiver being burned out if too much power is pushed at it, e.g. using 40km optics for a 10m datacenter run. So, match the optic’s power to the cable length.

This leaves us with our final potential issue – and the subject of this blog. Transmit (TX) power dropping over time.

So, what’s the big deal?

Most of the time, the reducing light level doesn’t make any difference to the circuits. Optics have a wide operating range, and if, for example, we are using 10km optics on a 2km link, then power can reduce significantly before any issues occur.

However, if power and light levels reduce far enough (eroding our link budget margin) then this can lead to CRC errors or link issues. At this point the overlying protocols, in our case Ethernet or Fibre Channel, may be impacted.
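To make “eroding our link budget margin” concrete, here is a minimal sketch of the arithmetic. Every number in it (TX power, receiver sensitivity, loss per kilometre, connector loss) is an illustrative assumption, not a vendor specification or a value from our network, and the function name is hypothetical.

```python
def link_margin_db(tx_dbm, rx_sensitivity_dbm, fibre_km,
                   loss_db_per_km, connectors, loss_per_connector_db):
    """Return the spare margin (dB) once path loss is taken off the TX power."""
    path_loss_db = fibre_km * loss_db_per_km + connectors * loss_per_connector_db
    rx_dbm = tx_dbm - path_loss_db          # power arriving at the far end
    return rx_dbm - rx_sensitivity_dbm      # positive means the link has headroom

# Illustrative numbers only (assumptions): a 2km run with two connectors.
healthy = link_margin_db(-2.0, -14.4, 2.0, 0.4, 2, 0.5)
aged = link_margin_db(-8.0, -14.4, 2.0, 0.4, 2, 0.5)

print(f"margin with healthy optic: {healthy:.1f} dB")
print(f"margin with aged optic:    {aged:.1f} dB")
```

With these assumed figures a 6 dB drop in TX power comes straight off the margin, which is why a short link can tolerate a tired optic for a long time while a link already near its budget cannot.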

Even at this point most protocols have error correction or other mechanisms to deal with packet loss.

But since these degradations occur slowly over time, would you notice unless you had long-term monitoring of detailed optical diagnostics?

Well, we notice…

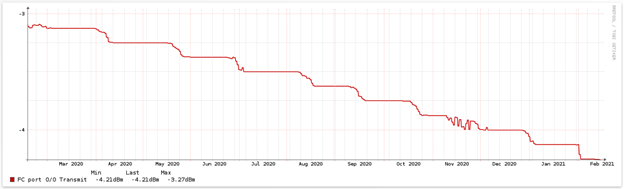

Even in this example, the ports and link itself are not showing any issues. There are no CRC errors, no discards or low-level packet loss. The degradation is only noticeable with timescales > 3 months, and 3 years of data highlights it nicely. -4.2dBm is well within the acceptable limits.
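A slow drift like this is easier to act on once it is reduced to a single number. The sketch below fits a straight line through TX power readings and reports the slope in dB per year. It is only an illustration: the readings are made up, the function name is hypothetical, and it does not reflect how Observium stores or exposes its data.

```python
def trend_db_per_year(samples):
    """Least-squares slope of TX power (dBm) against time, in dB per year.

    `samples` is a list of (days_since_first_sample, tx_dbm) pairs.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples to fit a trend")
    mean_t = sum(t for t, _ in samples) / n
    mean_p = sum(p for _, p in samples) / n
    sxx = sum((t - mean_t) ** 2 for t, _ in samples)
    if sxx == 0:
        raise ValueError("samples must span more than one point in time")
    sxy = sum((t - mean_t) * (p - mean_p) for t, p in samples)
    return (sxy / sxx) * 365.0  # slope per day scaled up to per year

# Made-up readings: one every 90 days for three years, falling 0.15 dB each time.
readings = [(90 * i, -2.4 - 0.15 * i) for i in range(13)]
slope = trend_db_per_year(readings)
print(f"TX power trend: {slope:.2f} dB/year")
```

For these invented readings the slope works out to about -0.61 dB per year, the sort of change that is invisible week to week but obvious across several years of history.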

The other thing that is important when looking at transmit power is that the DDM is measuring the output before it leaves the transceiver. That means it is not subject to cable issues or joints.

But I want to head this off before it does become a problem.

The question is, why does this happen? And at what level should I draw the line?

Now that’s a little bit harder to answer. Whilst we can see TX power dropping, there is no issue on the link and no CRC errors.

Why is this optic different? It is the same age, same genuine vendor (reputable brand) and similar serial number to the optic in the adjacent port which is showing no degradation over the same time at all.

There's not a lot of readily available information out there about the longevity of optics, but there is plenty of anecdotal evidence of "Yeah, that happens."

Vendors seem to equate longevity with quality (and cost). We only use optics from reputable vendors, so it seems a fair assumption that ours should last, yet we are not seeing a correlation between brand, cost and reliability.

On our admittedly small sample size, some optics degrade and some don’t.

Let me repeat, laser optics can and do degrade over time. Most optics use diode lasers.

The main failure mode for laser diodes is internal degradation due to crystal defects. The rate at which these defects are introduced in the crystal is proportional to the operating current and temperature. As a result of these defects, as the laser ages, its threshold current increases while its slope efficiency decreases. At a constant bias current, the optical power from the laser is found to decrease almost exponentially with age, where the time constant of the decrease is proportional to bias current. Another failure mechanism associated with lasers is facet degradation and damage. This is usually a result of intense optical power passing through the facet and an increase in nonradiative recombination at facets. The overall effect of these degradations is a reduction in facet reflectance, causing an increase in laser threshold current.

– Fiber Optics Engineering. Mohammad Azadeh.

What does that mean? It means the laser is aging. Either the semiconductor diode is becoming less efficient, or the ‘lens’ that the light is passing through is becoming more opaque.
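One practical consequence of the “almost exponentially” in that quote: an exponential fall in milliwatts is a straight line in dBm, which is the unit DDM graphs normally use. The sketch below shows this with assumed values (0.5 mW at install and a 10-year time constant, both chosen purely for illustration).

```python
import math

def mw_to_dbm(power_mw):
    """Convert optical power from milliwatts to dBm."""
    return 10.0 * math.log10(power_mw)

def aged_power_mw(initial_mw, years, time_constant_years):
    """Exponential decay model: P(t) = P0 * exp(-t / tau)."""
    return initial_mw * math.exp(-years / time_constant_years)

# Assumed values for illustration only.
levels_dbm = [mw_to_dbm(aged_power_mw(0.5, year, 10.0)) for year in range(4)]
yearly_drop_db = [levels_dbm[i] - levels_dbm[i + 1] for i in range(3)]

for year, level in enumerate(levels_dbm):
    print(f"year {year}: {level:.2f} dBm")
```

Under this model the optic loses the same number of dB every year, so a steady downward slope on a dBm graph is consistent with the ageing described above.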

Now I am not an optical engineer. And in reality, there is little I can do when it comes to optic design.

What I can do is monitor what we have, and proactively replace optics as needed before we have a customer impacting event. It’s happened once and I don’t want it to happen again.

We automatically monitor all TX light levels that we can, and now alert through our monitoring platform when optics meet our conditions for replacement. Working out what those levels should be is a bit of a black art. Values between -4dBm and -9dBm get passed around, and the only consistent comment is “replace them when they cause issues”.

That is too late for me. We have settled on a value that gives us a good balance between expected optic life and getting in there before issues occur. I can see at-a-glance what requires further investigation.
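The check itself is simple once a threshold is chosen. Here is a minimal sketch, assuming the TX levels have already been pulled out of the monitoring platform into a dictionary. The -6.0 dBm threshold, the port names and the function name are all hypothetical, and this is not the value we actually use.

```python
REPLACE_BELOW_DBM = -6.0  # hypothetical threshold, for illustration only

def optics_to_investigate(tx_levels, threshold_dbm=REPLACE_BELOW_DBM):
    """Return (port, tx_dbm) pairs at or below the threshold, weakest first."""
    flagged = [(port, dbm) for port, dbm in tx_levels.items()
               if dbm <= threshold_dbm]
    return sorted(flagged, key=lambda item: item[1])

# Made-up readings keyed by a made-up port naming scheme.
tx_levels = {
    "sw1:Te1/1": -2.3,
    "sw1:Te1/2": -6.4,
    "sw2:Te1/7": -4.2,
    "sw2:Te1/8": -7.1,
}
flagged = optics_to_investigate(tx_levels)
for port, dbm in flagged:
    print(f"{port}: TX {dbm} dBm, schedule for replacement")
```

Sorting weakest first puts the optic closest to trouble at the top of the list, which is the at-a-glance view we are after.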

Whether I can get our vendor to replace the optics before they fail is another matter. Either way, the optics are swapped out and our platform continues to operate reliably. Just one of the many small things that we at vBridge do to ensure uptime for customer workloads.